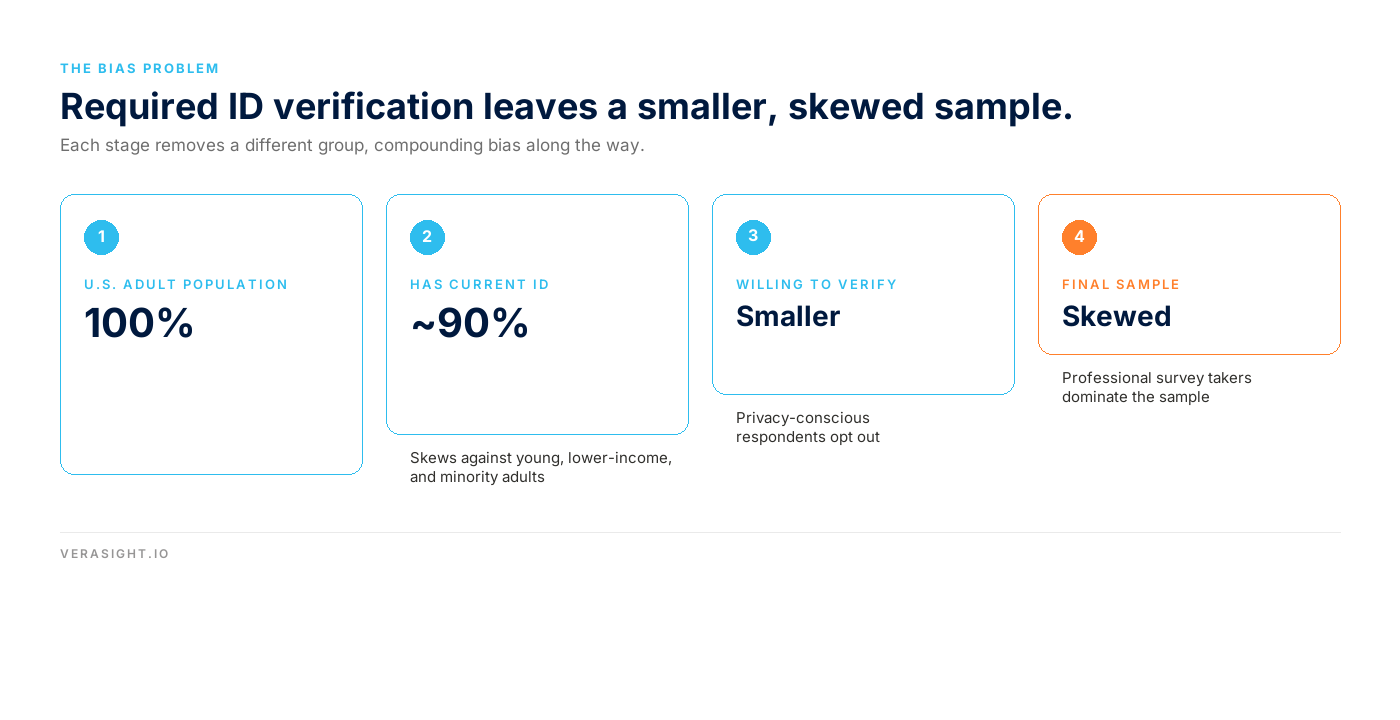

Some survey organizations now require government ID verification from every respondent they interview. Unfortunately, this ID verification harms data quality and biases results.

Government ID verification is straightforward. Before taking a survey, each person must upload a video of themselves and a picture of a current government-issued ID, such as a driver’s license or passport (expired documents are not accepted). A third-party company then verifies that the person in the video matches the photo on the ID. Once verified, the individual is invited to take surveys.

Unfortunately, requiring all respondents to upload government-issued IDs produces biased data

Several factors produce this bias. First, those without a current driver’s license or passport are ineligible to take the surveys. This excludes a substantial portion of the population. Almost 10 percent of adults in the U.S. do not have a current driver’s license. Even more concerning, those without IDs are not evenly distributed across society. Young people, those with less income and lower education levels, Black and Hispanic Americans, and individuals with disabilities are all less likely to have current government-issued IDs. The exclusion of these individuals means surveys that require ID verification are necessarily biased. This bias is a major concern for any study that seeks to be representative of the general public as well as any study that seeks to understand these particular segments of the population.
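
To make the size of this coverage bias concrete, here is a minimal sketch of the standard coverage-bias identity: the gap between the covered group's mean and the full population's mean equals the excluded share times the difference between the two groups. The function name and every number below are hypothetical illustrations, not estimates from any real survey.

```python
def coverage_bias(share_excluded: float, mean_covered: float, mean_excluded: float) -> float:
    """Return (covered-group mean) minus (full-population mean).

    Uses the identity: bias = share_excluded * (mean_covered - mean_excluded).
    """
    if not 0.0 <= share_excluded <= 1.0:
        raise ValueError("share_excluded must be between 0 and 1")
    return share_excluded * (mean_covered - mean_excluded)


# Hypothetical inputs: 10% of adults cannot take the survey, and support for
# some policy is 60% among those with a current ID and 40% among those without.
bias = coverage_bias(share_excluded=0.10, mean_covered=0.60, mean_excluded=0.40)
print(f"Support is overstated by {bias:.3f} (as a proportion)")
```

Under these assumed inputs, the survey would overstate support by roughly two percentage points. The bias grows with both the excluded share and the gap between the groups, which is why unevenly distributed ID ownership matters.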

Second, among those with current IDs who are eligible to take surveys, we must ask: who is unwilling to upload both a video of themselves and an image of their current driver’s license or passport? Anyone who is uncomfortable providing either one will not be included in the survey sample. This bias is especially concerning for studies of political attitudes, because trust in organizations and concern about data privacy vary by political party.

Third, we need to consider who will be most willing to upload their government identification. The answer is “professional survey takers.” Those who view survey taking as a steady stream of income are most willing to agree to government ID verification. One indication of professional survey taking is the percentage of respondents who take surveys from a computer. We should expect a survey that is representative of the population to have a sizable portion of the sample taking the survey on their cell phones. Not only do most people keep their cell phones with them throughout the day, but cell phones are also convenient for taking a single survey when time allows.

By contrast, we would expect those who supplement their income by taking as many surveys as possible to sit at a computer so that continual survey taking is as comfortable as possible. It’s thus not surprising that a prominent survey organization that requires government ID verification recently reported that 84% of its respondents take surveys on desktops. Beyond not being representative, these professional survey takers introduce other biases: they learn about issues commonly asked about in surveys, and they learn how to pass attention-check questions while skimming through other questions. Furthermore, when individuals take back-to-back surveys, the topics and considerations raised in one survey may influence how they respond in the next, creating further data quality issues.

These biases can compromise experimental findings

Most people conduct surveys because they want to understand a particular group, such as voters, consumers, young people, or even the general public. Biased data means biased conclusions. The biases from requiring ID verification also hurt experimental research. Imagine experimental studies that test which messaging increases support for climate change mitigation or which strategies reduce vaccine hesitancy. The estimated treatment effect might be biased upward because those least trusting of social institutions, who refused to upload their current government ID, are not in the sample. Biased data are just as problematic for experimental studies as for traditional surveys.
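
The arithmetic behind this concern can be sketched in a few lines. The example assumes, purely for illustration, that a message moves high-trust respondents but has no effect on low-trust respondents, and that the low-trust group is entirely missing from an ID-verified sample. The group shares, effect sizes, and function name are all hypothetical.

```python
def average_treatment_effect(groups):
    """Share-weighted average of group-level treatment effects.

    `groups` is a list of (population_share, effect) pairs; shares are
    renormalized so the function also works on a subset of the population.
    """
    total_share = sum(share for share, _ in groups)
    if total_share <= 0:
        raise ValueError("at least one group with a positive share is required")
    return sum(share * effect for share, effect in groups) / total_share


# Hypothetical groups: (share of population, effect of the message on support)
high_trust = (0.8, 0.10)  # message raises support by 10 points
low_trust = (0.2, 0.00)   # message has no effect

population_ate = average_treatment_effect([high_trust, low_trust])
verified_sample_ate = average_treatment_effect([high_trust])  # low-trust group absent

print(f"Population effect:      {population_ate:.2f}")
print(f"Verified-sample effect: {verified_sample_ate:.2f}")
```

With these assumed values, the verified sample reports a larger effect than the message would actually have in the full population. Random assignment within the sample does not fix this, because the problem is who is in the sample, not how treatment was assigned.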

Fortunately, government-issued IDs are not necessary to eliminate fraud

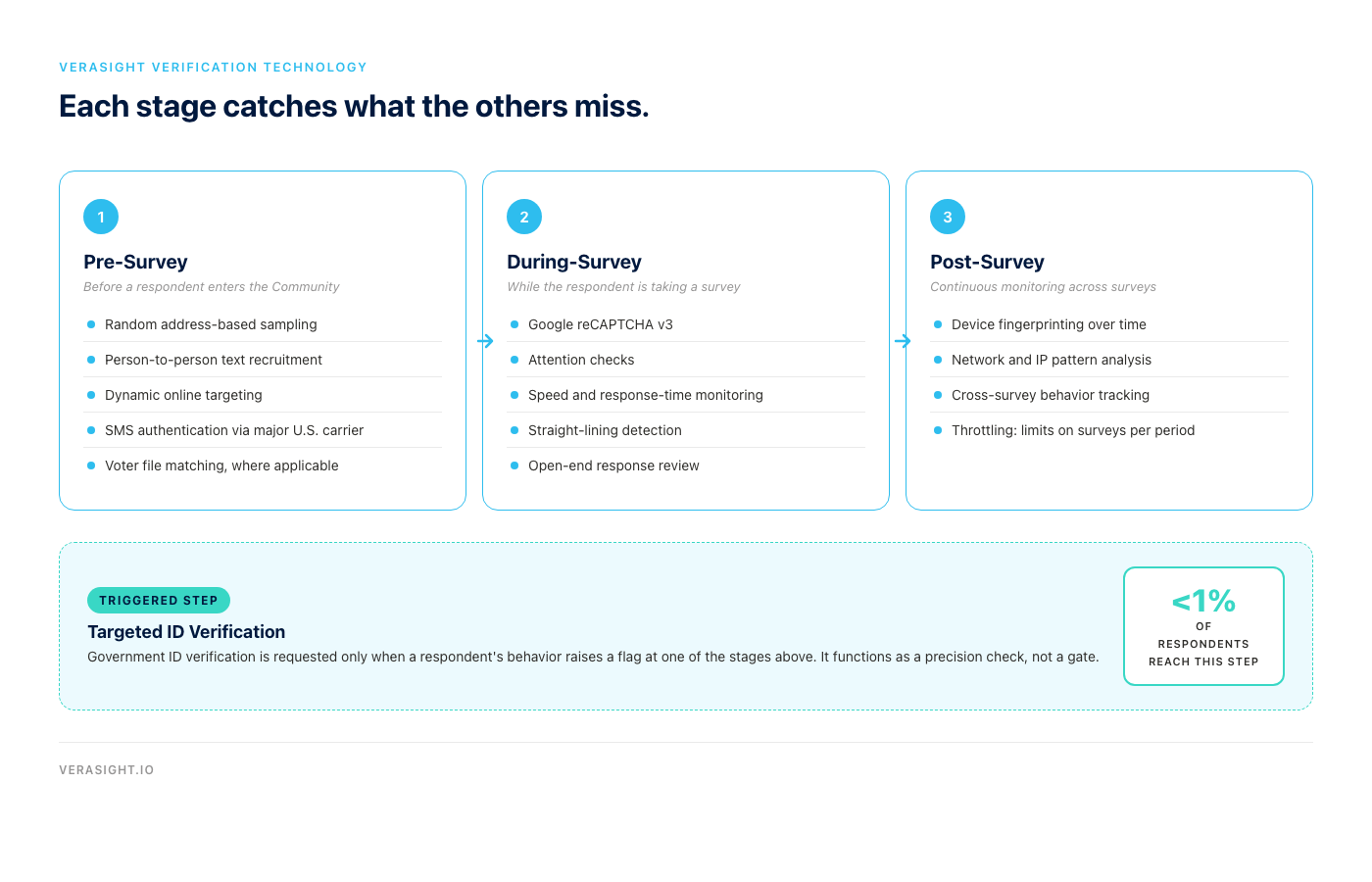

The good news is that it is not necessary to require every respondent to upload a government-issued ID. Verasight has developed numerous other, less intrusive strategies to verify respondents. These strategies include cell-phone authentication (restricted to major U.S. carriers); sampling from verified addresses and lists, such as voter files or commercial files, and confirming that the respondent matches the person selected from the list; and numerous within- and between-survey checks.

But don’t just take our word for it. A recent independent study, conducted by prominent researchers and commissioned by Prolific, found almost zero evidence of bots in Verasight data. Further, the level of bot evidence in Verasight data was lower than what was detected in a comparable sample of Prolific survey data, even though Prolific requires government ID verification of all of its respondents. Government ID verification is not necessary, but it is still an important tool.

The right way to use government ID verification

The use of government-issued IDs for respondent verification is still a critical tool for survey research organizations. Like most tools, the key is using it correctly.

Verasight only requires government ID verification when a respondent triggers a data quality flag. Thus, instead of forcing all respondents to submit videos and current government IDs, which creates the bias described above, Verasight only requires ID verification if there is evidence of concern. Here are two examples that would trigger required ID verification.

- Suppose we detect two or three survey respondents taking different surveys from the same tablet or computer. Are these family members or roommates, or is this a single individual logging on more than once? If they are family members or roommates, banning the accounts makes the data less representative. If it is a single individual, this is an instance of fraud that needs to be eliminated. When this happens, we immediately pause the accounts and invite the respondents to submit ID verification. This allows us to assess whether we have observed family members or roommates, whose accounts will be reinstated, or a fraudulent account, which remains deactivated.

- Suppose we detect multiple users taking multiple surveys from a single IP address. Are these unrelated survey takers in a public library, or a single individual logging in more than once? Again, we pause the accounts and require ID verification before they can continue taking surveys.
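
The two triggers above can be sketched as a simple grouping check. This is an illustrative sketch only, not Verasight's actual system: the `SurveySession` fields, the `accounts_to_verify` function, and the one-account-per-device-or-IP threshold are all assumptions made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SurveySession:
    """Hypothetical record of one survey session."""
    account_id: str
    device_id: str
    ip_address: str


def accounts_to_verify(sessions, max_accounts_per_key=1):
    """Return account IDs that share a device or an IP address with another account.

    Flagged accounts would be paused and invited to complete ID verification,
    rather than banned outright.
    """
    accounts_by_device = defaultdict(set)
    accounts_by_ip = defaultdict(set)
    for session in sessions:
        accounts_by_device[session.device_id].add(session.account_id)
        accounts_by_ip[session.ip_address].add(session.account_id)

    flagged = set()
    for accounts in list(accounts_by_device.values()) + list(accounts_by_ip.values()):
        if len(accounts) > max_accounts_per_key:
            flagged |= accounts
    return flagged


# Hypothetical sessions: accounts "a" and "b" share one tablet; "c" is alone.
sessions = [
    SurveySession("a", "tablet-1", "203.0.113.5"),
    SurveySession("b", "tablet-1", "203.0.113.9"),
    SurveySession("c", "laptop-7", "198.51.100.2"),
]
flagged = accounts_to_verify(sessions)
print(sorted(flagged))
```

The sketch flags both accounts on the shared tablet and leaves the third untouched. The key design choice is that a flag leads to a verification request instead of a ban, so roommates or family members can be reinstated.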

ID verification is thus a strategy for ensuring that our quality flags do not inadvertently exclude real and sincere survey takers. Of course, not everyone accepts the invitation to submit their government ID. Some individuals were engaging in fraudulent behavior, and they never re-enter our surveys. Others may be honest actors, but may not be willing to submit their ID. But this approach minimizes the above concerns in two ways. First, since we’re only requiring IDs of those with quality flags, the overwhelming majority of Verasight survey respondents are completely unaffected. Second, since we’re requiring IDs of individuals who have already taken at least one Verasight survey, they are familiar with us and much more likely to submit an ID than if they were required to submit an ID before ever taking a single survey.

Verification with government IDs can be a powerful tool to support data quality and to avoid incorrectly identifying fraudulent behavior. But IDs should not be required of all respondents. Doing so creates biased data, compromising experiments and research.