Survey screeners can be detrimental to data quality. Fortunately, they are no longer necessary.

Surveys often begin with a “screener,” a short set of questions designed to determine whether an individual has the characteristics the researcher is looking for in respondents, such as a history of cigarette smoking or a college degree.

Screeners need to be carefully constructed so that each question is clear but the screener's objective stays hidden (for example, to keep respondents from simply answering “yes” to every question in hopes of qualifying for the survey). Savvy survey takers motivated by maximizing rewards may misrepresent themselves on screener questions to increase their chances of qualifying. Honest survey takers often grow frustrated when they are routed through multiple screeners before finally qualifying for a survey that will earn them rewards. Over time, even scrupulously honest individuals may learn how they need to answer screeners in order to meet survey qualifications, save time, and earn rewards.

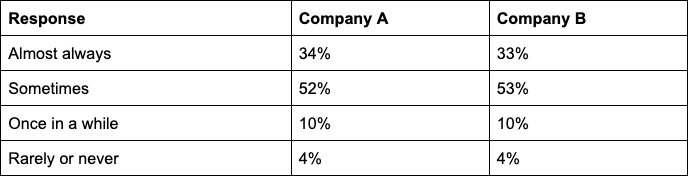

When we asked respondents from two large, popular sample providers (Company A and Company B) about their experiences with screener questions, respondents on both platforms reported being “screened out” frequently: about a third of each sample said they are “almost always” told that they do not qualify for a given survey.

About ⅓ of respondents from popular sample providers A and B report feeling that they are “almost always” told they do not meet qualifications for any given survey.

How often are you told that you do not qualify for a given survey?

What is Verasight’s stance on screeners?

At Verasight, we don’t screen out respondents who do not qualify for a survey, and we pay respondents regardless of whether they qualify. We do this for two reasons:

- We value our panelists’ time and want them to feel secure in answering questions honestly, knowing that they will earn their rewards points no matter what their answers are.

- We care about data quality. As noted above, when respondents are asked screener questions before beginning a survey, they face incentives to misrepresent themselves, they may be fatigued or frustrated from failing to qualify for previous surveys, and the screener questions may unduly influence their responses to subsequent questions. At Verasight, we want you to be confident that your survey respondents are providing sincere and accurate answers to your questions. If you are interested in surveying a specific group, such as consumers of a particular product, people in a particular age group, or residents of a particular area, we will identify these individuals without filtering people out via screeners. This keeps our panelists engaged and excited to participate in our surveys, and allows us to deliver high-quality data to you.

To deliver high-quality, targeted samples while maintaining an engaged panel, we build extensive profiles of our respondents. By creating and continually refreshing demographic and behavioral profiles, we can help our customers find the right people from the start, without relying on extensive screeners. Because we recruit all respondents directly instead of outsourcing data collection to third parties, we can build a rich understanding of our community members. Importantly, Verasight clients pay only for the respondents who meet their required criteria.

Curious about whether Verasight is the right sample provider for your next project? Reach out to our team today to learn more.

Survey Details:

Company A:

The Benchmarking Survey B was conducted by Company A from January 20 - January 26, 2023. The sample size is 1,002 respondents. Respondents were recruited from a variety of vendors sampled through the Company A survey sample marketplace.

The data are weighted to match the Current Population Survey on age, race/ethnicity, sex, income, education, region, and metropolitan status, as well as to population benchmarks of partisanship and 2020 presidential vote. The margin of error, which incorporates the design effect due to weighting, is ±3.4 percentage points.

Company B:

The Benchmarking Survey C was conducted by Verasight from February 9 - February 23, 2023. The sample size is 1,014 respondents. Respondents were recruited from a variety of vendors sampled through the Company B survey sample marketplace.

The data are weighted to match the Current Population Survey on age, race/ethnicity, sex, income, education, region, and metropolitan status, as well as to population benchmarks of partisanship and 2020 presidential vote. The margin of error, which incorporates the design effect due to weighting, is ±3.3 percentage points.

To ensure data quality, Company B implemented several quality assurance procedures, including removing respondents who failed straight-lining checks, gave nonsensical answers to open-ended questions, or showed patterned responses.
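Both margins of error are described as incorporating the design effect due to weighting, but the exact formula is not stated. The following is a minimal sketch that assumes the common textbook approach. It uses a 95% confidence interval for a proportion at p = 0.5, with the variance inflated by Kish's approximate design effect for unequal weights. The function names are hypothetical. The sketch also inverts the formula to show what design effect each reported margin would imply under these assumptions.

```python
import math

def kish_design_effect(weights):
    # Kish's approximation for the design effect of unequal weights:
    # deff = n * sum(w^2) / (sum(w))^2, which equals 1.0 when all weights are equal.
    n = len(weights)
    total = sum(weights)
    return n * sum(w * w for w in weights) / (total * total)

def margin_of_error(n, deff=1.0, p=0.5, z=1.96):
    # Half-width of a 95% confidence interval for a proportion (z = 1.96),
    # with the simple-random-sampling variance multiplied by the design effect.
    return z * math.sqrt(deff * p * (1 - p) / n)

def implied_design_effect(reported_moe, n, p=0.5, z=1.96):
    # Inverts margin_of_error(): the design effect consistent with a reported margin.
    return (reported_moe / z) ** 2 * n / (p * (1 - p))

if __name__ == "__main__":
    # Sample sizes and margins as reported in the survey details (assumed p = 0.5, 95% level).
    print(round(margin_of_error(1002), 4))                # unweighted margin for n = 1,002 → 0.031
    print(round(implied_design_effect(0.034, 1002), 2))   # Company A → 1.21
    print(round(implied_design_effect(0.033, 1014), 2))   # Company B → 1.15
```

Kish's formula captures only the variance inflation from unequal weights. If a provider used a different variance estimator, such as one that also accounts for clustering or raking, its published margin would not be exactly reproducible this way.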